I sometimes miss the particular discomfort of staring at a paragraph for far too long, knowing something mattered but not yet knowing what it was. It felt slow, frustrating, and vaguely pointless. But over time, that discomfort changed how I think.

Imagine a world where students can produce essays, summaries, and arguments without ever sitting with that kind of confusion, without wrestling with a single paragraph, and without pausing to decide what really matters. That world is already here. AI can generate answers, but it cannot make someone think, struggle, or grow in the ways university work used to demand.

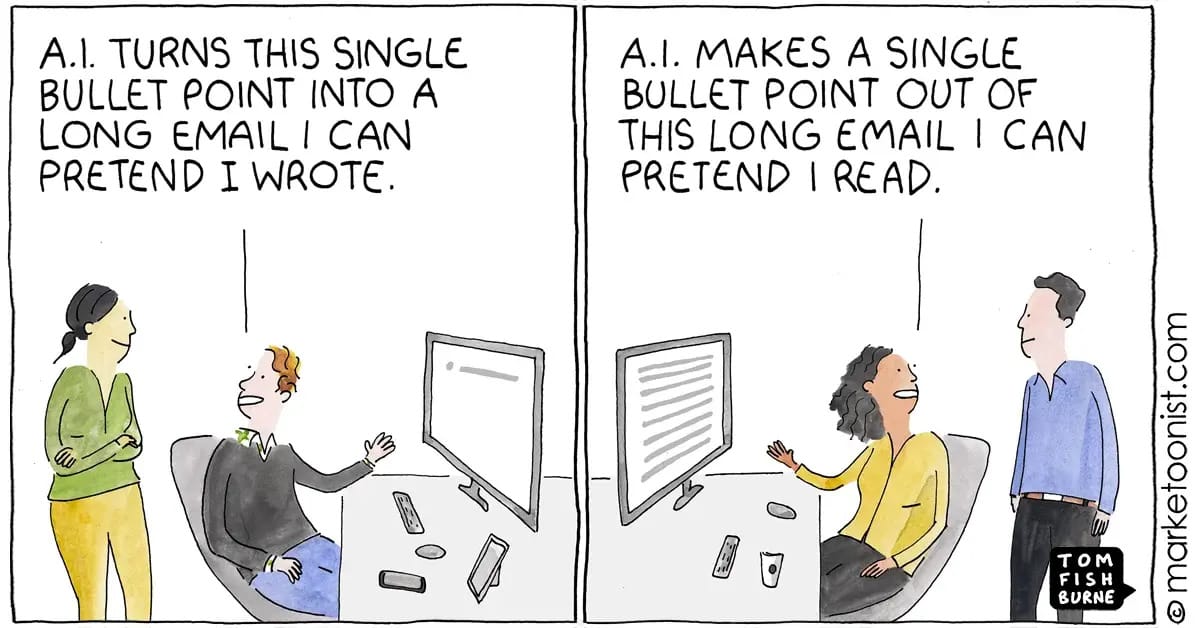

Most of us already know this. Students are using AI. By now, seeing it in an essay or a project is as ordinary as noticing someone using Google to look something up. It is part of the workflow, quietly reshaping what learning looks like, often without us noticing the deeper consequences.

That quiet shift is worth paying attention to. If producing polished outputs is no longer a reliable signal of deep thinking, then what are students actually learning? More importantly, what should they be learning to strengthen the uniquely human part of academic work?

LLMs like ChatGPT have become part of everyday workflows. People use them to outline essays, polish writing, or get unstuck when thinking feels impossible. This is what humans naturally do when a tool reduces friction or saves mental energy.

These tools are impressive and genuinely helpful. They reduce the tedium of writing and help people move forward when they feel stuck. This is not a post about cheating or the collapse of education. It is about a quieter shift in what university work actually does for the people doing it.

Food for thought

Many of the tasks we assign are now things AI does very well: summarising papers, comparing theories, constructing arguments. The tasks themselves have not become meaningless, but the experience of doing them has changed.

Previously, even uninteresting assignments forced students to decide what mattered in a text, organise their thoughts, and sit with uncertainty until clarity emerged. That process built intellectual muscle over time. The point was not just to produce an essay but to become the kind of person who could decide what mattered.

Now, it is possible to hand off that first pass to AI and still produce something that looks acceptable. People will naturally take the easier route. That is not surprising: tools change the work we do. Calculators, reference managers, and search engines all reshaped how we approach learning. AI is just the latest example. The difference is how much of the thinking process it can quietly absorb.

Rethinking what students should learn

Students will use AI whether we like it or not. The question is what we want them to gain when polished work is no longer a reliable signal of thinking deeply.

Perhaps degrees will come to signal more than the ability to produce tidy outputs. We might shift what we value in student work: less focus on the final product and more attention to the process, including drafts, conversations, and moments of uncertainty. The final essay should not pretend to capture all the thinking behind it.

Protecting human agency

Here is the central point: AI can produce answers, but it cannot make students think. It cannot make them wrestle with uncertainty, weigh what matters, or develop confidence in their own judgment. That part is human, and it is the part of university work that we should protect and strengthen.

The work we do as educators and learners should focus less on producing flawless essays and more on creating space for students to exercise agency: to make choices, to argue with themselves, and to struggle and succeed in ways that AI cannot replicate. That is the skill that remains uniquely human.

If nothing else, we can probably all admit that the hardest part of academic life is not the technology. It is the deadlines, the pressure, and the challenge of showing up fully as a thinker when it would be easier not to.

Take-home message

What worries me is not that students will use AI. What worries me is that fewer of them will ever be asked to sit in the uncomfortable space where thinking actually happens. The challenge now is not to stop students using AI. It is to redesign learning so that the most valuable parts of education are the parts that cannot be automated away.

Questions to consider

If AI can handle the first pass of thinking, what should students focus on instead?

How can assessment emphasise process, judgment, and reasoning rather than polished outputs?

Which uniquely human skills do we want graduates to carry forward in an AI-rich world?

Worth reading

If you’re interested in how these questions play out at an institutional level, I recently wrote for Times Higher Education about how universities are navigating structural shifts in the AI era.

This piece was also shaped by conversations with Dr Karen Koza.

Stay up to date

Whether you prefer learning at your own pace or working closely with my dedicated team of experts, I offer support to help you plan, structure, and elevate your research journey. Learn more and find the right option for you at ResHub.